In this article, you’ll learn six practical frameworks you can use to give your AI agents persistent memory to improve context, recall, and personalization.

Topics covered include:

- What “agent memory” means and why it matters for real-world assistants.

- Six frameworks for long-term memory, retrieval, and context management.

- Practical project ideas to help you experience agent memory in action.

Let’s get started.

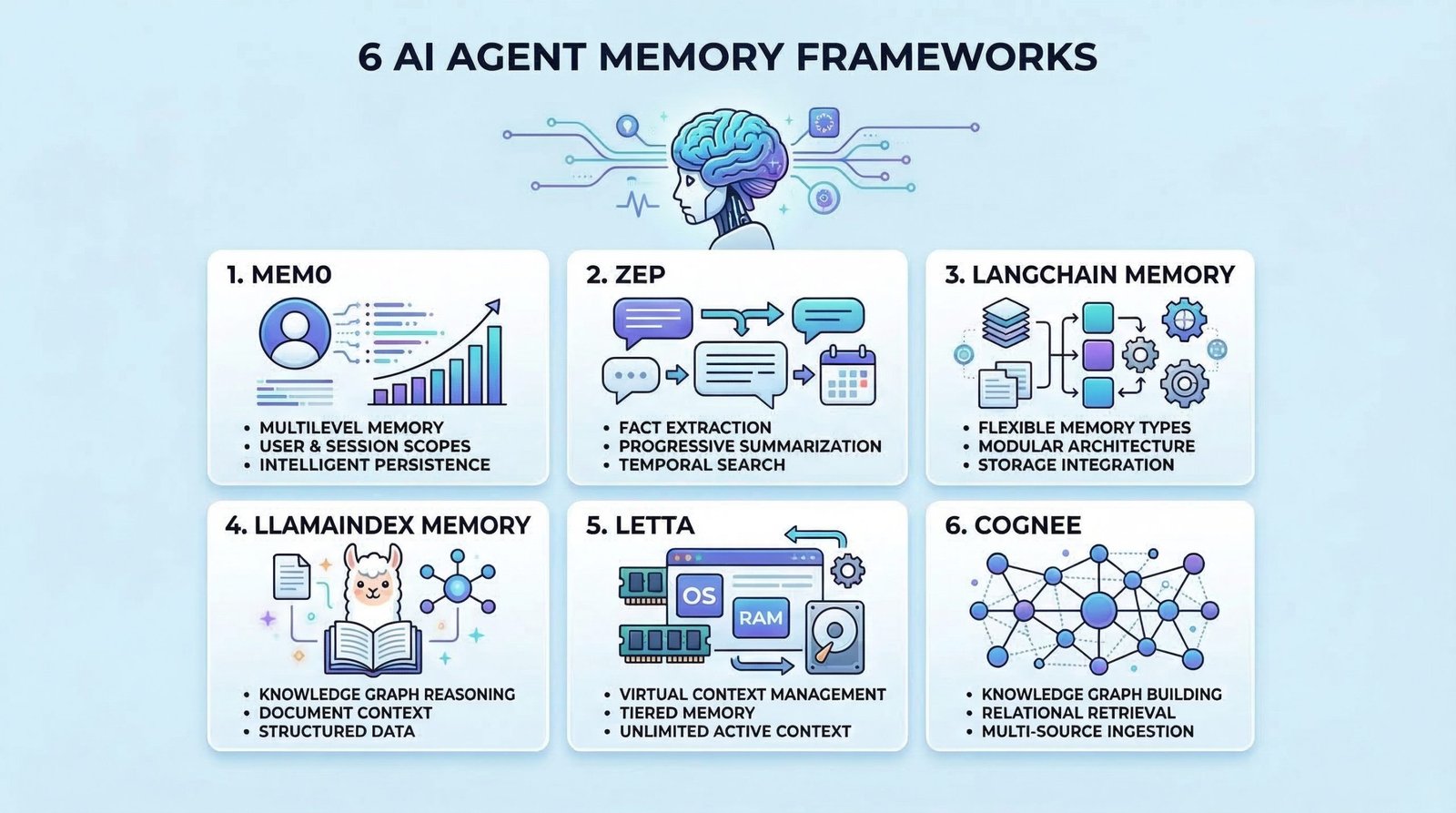

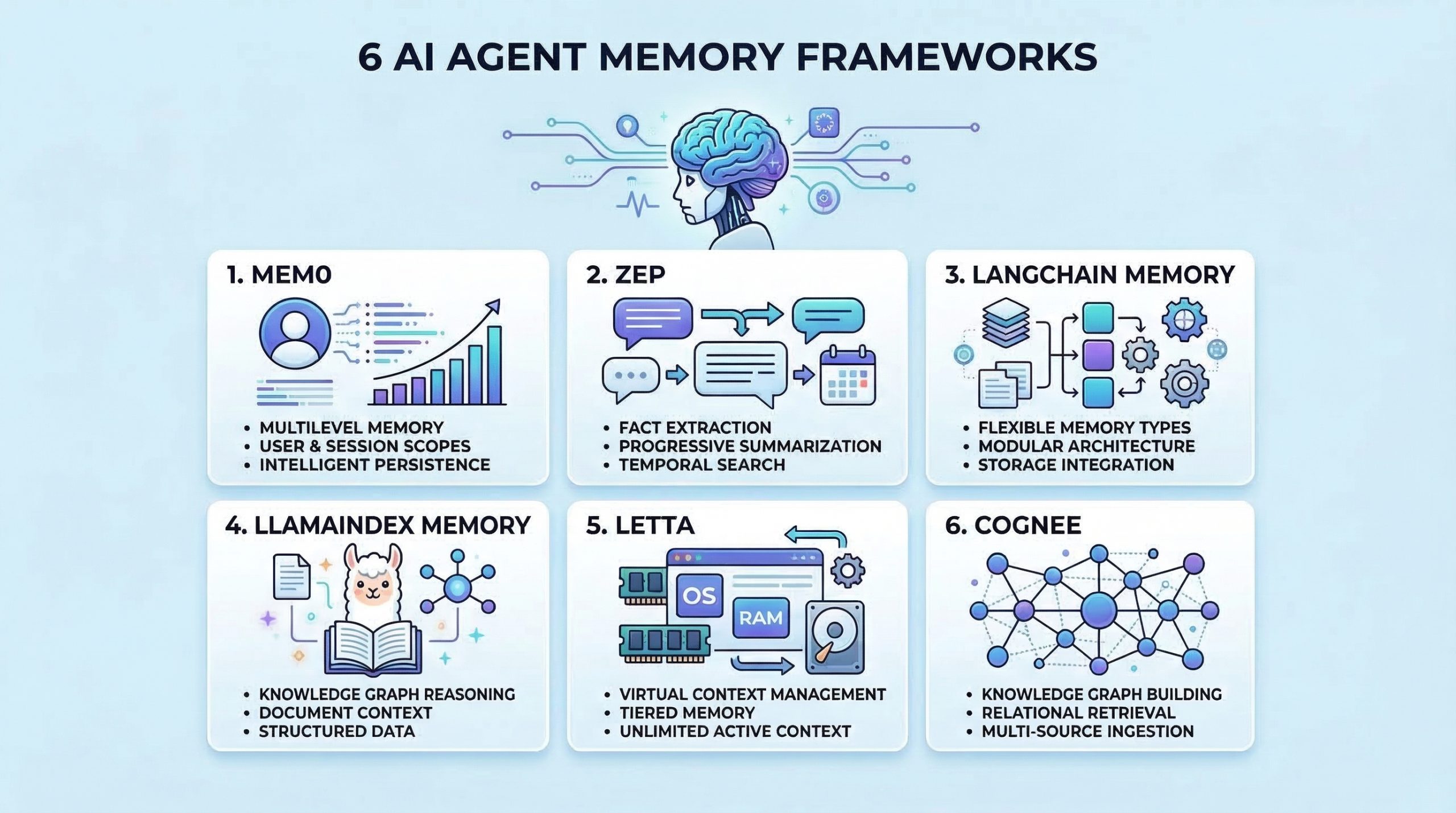

6 Best AI Agent Memory Frameworks to Try in 2026

Image by editor

Introduction

Memory helps AI agents evolve from stateless tools into intelligent assistants that learn and adapt. Without memory, agents cannot learn from past interactions, maintain context across sessions, or build knowledge over time. Implementing an effective memory system is also complex, because you need to handle storage, retrieval, summarization, and context management.

As an AI engineer building agents, you need a framework that goes beyond simple conversation history. A suitable memory framework allows agents to memorize facts, recall past experiences, learn user preferences, and retrieve relevant context when needed. This article covers AI agent memory frameworks that are useful for:

- Storing and retrieving conversation history

- Managing long-term factual knowledge

- Implementing semantic memory retrieval

- Handling context windows effectively

- Personalizing agent behavior based on past interactions

Let’s take a look at each framework.

⚠️ Note: This is not an exhaustive list but an overview of leading frameworks in this space, presented in no particular order.

1. Mem0

Mem0 is a dedicated memory layer for AI applications that provides intelligent and personalized memory capabilities. It is specifically designed to give agents long-term memory that persists between sessions and evolves over time.

Here’s what makes Mem0 a good fit for agent memory:

- Extracts and stores relevant facts from conversations

- Provides multi-level memory with user-level, session-level, and agent-level scoping

- Combines vector search with metadata filtering for hybrid retrieval that is both semantic and precise

- Includes built-in memory management and memory versioning
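
To make the scoping and filtering ideas above concrete, here is a minimal, self-contained sketch. It does not use the Mem0 SDK: `ScopedMemoryStore`, `add`, and `search` are hypothetical names, and simple word overlap stands in for real vector similarity.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryRecord:
    text: str
    metadata: dict = field(default_factory=dict)


class ScopedMemoryStore:
    """Toy in-memory store (hypothetical, not the Mem0 API)."""

    def __init__(self):
        self.records = []

    def add(self, text, **metadata):
        # Scope keys such as user_id or session_id are stored as plain metadata.
        self.records.append(MemoryRecord(text, metadata))

    def search(self, query, limit=3, **filters):
        query_words = set(query.lower().split())
        scored = []
        for record in self.records:
            # Metadata filtering: every requested key must match exactly.
            if any(record.metadata.get(k) != v for k, v in filters.items()):
                continue
            # Word overlap is a stand-in for embedding similarity.
            overlap = len(query_words & set(record.text.lower().split()))
            if overlap:
                scored.append((overlap, record.text))
        # Highest overlap first; list.sort is stable, so ties keep insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:limit]]


store = ScopedMemoryStore()
store.add("prefers vegetarian food", user_id="alice")
store.add("prefers spicy food", user_id="bob")
store.add("asked about food delivery times", user_id="alice", session_id="s1")

# User-level scope: everything remembered about alice.
user_hits = store.search("food preferences", user_id="alice")
# Session-level scope: only what was stored for session s1.
session_hits = store.search("food preferences", user_id="alice", session_id="s1")
print(user_hits)
print(session_hits)
```

Narrowing the filters narrows the scope: the same query returns two memories at the user level and one at the session level.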

Start with the Mem0 quickstart guide, then explore the memory types and memory filters documentation.

2. Zep

Zep is a long-term memory store designed specifically for conversational AI applications. It focuses on extracting facts, summarizing conversations, and efficiently providing relevant context to agents.

Here’s why Zep excels at conversational memory:

- Extracts entities, intents, and facts from conversations and stores them in a structured format

- Provides progressive summarization that condenses long conversation histories while retaining important information

- Offers both semantic and temporal retrieval, so agents can search memories by meaning or by time

- Supports session management with automatic context construction, giving agents the memories relevant to each interaction
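
Progressive summarization and time-based lookup can be sketched in a few lines. This is an illustration of the idea only, not Zep’s API: `RollingSummaryMemory` and `summarize` are hypothetical, and `summarize` is a placeholder for the LLM call a real system would make.

```python
from datetime import datetime, timedelta


def summarize(summary, message, max_words=4):
    # Placeholder for an LLM call: keep only the first few words of the message.
    snippet = " ".join(message.split()[:max_words])
    return snippet if not summary else f"{summary}; {snippet}"


class RollingSummaryMemory:
    """Keeps recent messages verbatim and folds older ones into a summary."""

    def __init__(self, max_recent=2):
        self.max_recent = max_recent
        self.summary = ""
        self.recent = []  # list of (timestamp, text) pairs

    def add(self, timestamp, text):
        self.recent.append((timestamp, text))
        # Fold the oldest messages into the summary once the buffer overflows.
        while len(self.recent) > self.max_recent:
            _, oldest = self.recent.pop(0)
            self.summary = summarize(self.summary, oldest)

    def since(self, cutoff):
        # Temporal retrieval over the verbatim buffer only.
        return [text for ts, text in self.recent if ts >= cutoff]

    def context(self):
        lines = [f"Summary so far: {self.summary}"] if self.summary else []
        return lines + [text for _, text in self.recent]


start = datetime(2026, 1, 1, 9, 0)
memory = RollingSummaryMemory(max_recent=2)
memory.add(start, "My order 1234 arrived damaged yesterday")
memory.add(start + timedelta(minutes=1), "I would like a replacement")
memory.add(start + timedelta(minutes=2), "Please ship it to my office")

print(memory.context())
print(memory.since(start + timedelta(minutes=2)))
```

The prompt stays bounded because only `max_recent` messages are kept verbatim; everything older survives only in condensed form.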

Start with the quick start guide, then refer to the Zep Cookbook for practical examples.

3. LangChain Memory

LangChain includes a comprehensive memory module that offers different memory types and strategies for different use cases. It is highly flexible and integrates seamlessly with the broader LangChain ecosystem.

Here’s why LangChain memory is valuable for agent applications:

- Provides multiple memory types, such as conversation buffer, summary, entity, and knowledge graph memory, for different scenarios

- Supports memory backed by a variety of storage options, from simple in-memory stores to vector and traditional databases

- Provides memory classes that can be easily swapped and combined to create hybrid memory systems

- Integrates natively with chains, agents, and other LangChain components for consistent memory handling
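
The “swap and combine” idea is easiest to see with a shared interface. The sketch below is plain Python rather than LangChain code: every class name here is hypothetical, and the only assumption is that each memory exposes `save` and `load`.

```python
class BufferMemory:
    """Keeps every message verbatim."""

    def __init__(self):
        self.messages = []

    def save(self, role, text):
        self.messages.append(f"{role}: {text}")

    def load(self):
        return list(self.messages)


class WindowMemory(BufferMemory):
    """Keeps only the last k messages (k must be at least 1)."""

    def __init__(self, k):
        super().__init__()
        self.k = k

    def load(self):
        return self.messages[-self.k:]


class EntityMemory:
    """Tracks simple 'My X is Y' facts stated by the user."""

    def __init__(self):
        self.facts = {}

    def save(self, role, text):
        words = text.rstrip(".").split()
        is_fact = len(words) >= 4 and words[0].lower() == "my" and words[2] == "is"
        if role == "user" and is_fact:
            self.facts[words[1]] = " ".join(words[3:])

    def load(self):
        return [f"{key} = {value}" for key, value in self.facts.items()]


class CombinedMemory:
    """Fans writes out to several memories and concatenates their reads."""

    def __init__(self, *memories):
        self.memories = memories

    def save(self, role, text):
        for memory in self.memories:
            memory.save(role, text)

    def load(self):
        lines = []
        for memory in self.memories:
            lines.extend(memory.load())
        return lines


def run_turns(memory):
    # The calling code is identical regardless of which memory is plugged in.
    memory.save("user", "My name is Dana.")
    memory.save("assistant", "Nice to meet you, Dana.")
    memory.save("user", "What can you do?")
    return memory.load()


full_history = run_turns(BufferMemory())
hybrid_context = run_turns(CombinedMemory(EntityMemory(), WindowMemory(k=1)))
print(hybrid_context)
```

Because `run_turns` depends only on the interface, moving from a full buffer to a hybrid of entity facts plus a short window is a one-line change.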

The Memory Overview in the LangChain docs has everything you need to get started.

4. LlamaIndex Memory

LlamaIndex provides memory capabilities integrated with its data framework. This makes it especially powerful for agents that need to remember and reason about structured information and documents.

Here’s why LlamaIndex memory is useful for knowledge-intensive agents:

- Combines chat history with document context so agents remember both the conversation and the information it referenced

- Provides composable memory modules that work seamlessly with LlamaIndex’s query engines and data structures

- Supports vector-store-backed memory, enabling semantic search over past conversations and retrieved documents

- Handles context window management by compressing history or retrieving only the relevant parts as needed
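
Combining document context with chat history under a fixed budget can be sketched as follows. This is not LlamaIndex code: `build_context` and `count_tokens` are hypothetical helpers, word overlap stands in for semantic retrieval, and tokens are approximated as whitespace-separated words.

```python
def count_tokens(text):
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def build_context(history, documents, query, budget):
    """Pick the most relevant document, then fill the rest with recent history."""
    query_words = set(query.lower().split())
    # Choose the document sharing the most words with the query.
    best_doc = max(
        documents,
        key=lambda doc: len(query_words & set(doc.lower().split())),
    )
    remaining = budget - count_tokens(best_doc)

    kept = []
    # Walk the history from newest to oldest until the budget runs out.
    for message in reversed(history):
        cost = count_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    # Restore chronological order for the messages that fit.
    return [best_doc] + kept[::-1]


history = [
    "user: hi there",
    "assistant: hello, how can I help",
    "user: what is the refund window",
]
documents = [
    "Refund policy: the refund window is 30 days",
    "Shipping policy: orders ship in 2 days",
]

context = build_context(history, documents, "refund window", budget=16)
print(context)
```

With a budget of 16 words, the refund document takes 8 and the latest user message takes 6, so older turns are dropped rather than truncated mid-message.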

Memory in LlamaIndex is a comprehensive overview of the framework’s short-term and long-term memory.

5. Letta

Letta takes inspiration from operating systems to manage LLM context, implementing a virtual context management system that intelligently moves information between the immediate context and long-term storage. It is one of the more distinctive approaches to solving memory problems in AI agents.

Here’s why Letta is great for context management:

- Uses a hierarchical memory architecture that mimics an operating system’s memory hierarchy, with the main context acting as RAM and external storage acting as disk

- Lets agents control their own memory through function calls that read, write, and archive information

- Handles context window limits by intelligently swapping information in and out of the active context

- Suits long-running conversational agents, since the agent can maintain effectively unbounded memory despite the fixed size of the context window
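
The operating-system analogy above can be illustrated with a small two-tier store. This is a conceptual sketch, not Letta’s implementation or API: `VirtualContext`, `write`, and `recall` are hypothetical names standing in for the memory functions an agent would call.

```python
class VirtualContext:
    """Two-tier memory: a bounded main context plus an unbounded archive."""

    def __init__(self, capacity):
        self.capacity = capacity  # max items in the "RAM" tier
        self.main_context = []    # what the model would see in its prompt
        self.archive = []         # the "disk" tier

    def write(self, item):
        self.main_context.append(item)
        # Page the oldest items out to the archive once the main context is full.
        while len(self.main_context) > self.capacity:
            self.archive.append(self.main_context.pop(0))

    def recall(self, keyword):
        """Page archived items that mention the keyword back into the main context."""
        matches = [item for item in self.archive if keyword.lower() in item.lower()]
        for item in matches:
            self.archive.remove(item)
            self.write(item)  # may evict something else to make room
        return matches


ctx = VirtualContext(capacity=2)
ctx.write("User's dog is named Biscuit")
ctx.write("User lives in Lisbon")
ctx.write("User asked about flights")  # evicts the dog fact to the archive

recalled = ctx.recall("dog")  # swaps the dog fact back in, evicting the Lisbon fact
print(ctx.main_context)
print(ctx.archive)
```

Nothing is ever deleted: facts move between tiers, which is how a fixed-size context can front an arbitrarily large memory.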

The introduction to Letta is a good starting point. You can then look at the core concepts and the LLMs as Operating Systems: Agent Memory course from DeepLearning.AI.

6. Cognee

Cognee is an open-source memory and knowledge graph layer for AI applications that structures, connects, and retrieves information with precision. It is designed to give agents a dynamic, queryable understanding of data: not just stored text, but interconnected knowledge.

Here’s what makes Cognee excel at agent memory:

- Builds a knowledge graph from unstructured data, so agents can reason over relationships instead of retrieving isolated facts

- Supports multi-source ingestion, including documents, conversations, and external data, to consolidate memory across diverse inputs

- Combines graph traversal with vector search, so retrieval reflects how concepts are related, not just how similar they are

- Includes pipelines for continuous memory updates, so knowledge evolves as new information flows in
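
To show what retrieving by relationship means, here is a minimal graph-traversal sketch. It covers only the graph half of the picture (no vector search) and does not use Cognee’s API: `KnowledgeGraph`, `add_fact`, and `find_path` are hypothetical names.

```python
from collections import defaultdict, deque


class KnowledgeGraph:
    """Directed graph of (subject, relation, object) facts."""

    def __init__(self):
        self.edges = defaultdict(list)

    def add_fact(self, subject, relation, obj):
        self.edges[subject].append((relation, obj))

    def find_path(self, start, goal):
        """Breadth-first search returning the chain of facts linking start to goal."""
        queue = deque([(start, [])])
        visited = {start}
        while queue:
            node, path = queue.popleft()
            if node == goal:
                return path
            for relation, neighbor in self.edges.get(node, []):
                if neighbor not in visited:
                    visited.add(neighbor)
                    queue.append((neighbor, path + [(node, relation, neighbor)]))
        return None  # no chain of facts connects the two concepts


graph = KnowledgeGraph()
graph.add_fact("Alice", "works_at", "Acme")
graph.add_fact("Acme", "uses", "PostgreSQL")
graph.add_fact("Bob", "works_at", "Globex")

path = graph.find_path("Alice", "PostgreSQL")
print(path)
```

A similarity search for “Alice” would not surface “PostgreSQL”, since the two strings share nothing; the graph connects them through Acme in two hops.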

Start with the quick start guide, then move on to setup configuration.

Summary

The frameworks described here offer different approaches to solving memory challenges. To gain hands-on experience with agent memory, consider building some of the following projects.

- Create a personal assistant with Mem0 that learns your preferences and remembers past conversations across sessions.

- Build customer service agents with Zep that remember customer history and provide personalized support.

- Develop a research agent with LangChain or LlamaIndex memory that remembers both conversations and analyzed documents.

- Design a long-context agent with Letta that handles conversations beyond the standard context window.

- Build a persistent customer intelligence agent with Cognee. The agent builds and evolves a structured memory graph of each user’s history, preferences, interactions, and behavioral patterns to provide highly personalized, context-aware support throughout long-term conversations.

Happy building!